Anyone building or modernising a Security Operations Centre will eventually reach the same conclusion: the quality of the data layer determines the quality of everything built on top of it. Detection, response, automation, reporting — it all stands or falls on how well security data is collected, normalised, and made accessible.

And yet, in many SOC projects, the data layer is still treated as an afterthought. Organisations invest heavily in SIEM technology, detection engineering, and playbook automation, while the underlying data foundations remain fragile. The result: high storage costs, slow queries, detection rules that constantly break due to inconsistent log formats, and analysts spending more time troubleshooting data problems than responding to actual threats.

Why is the data layer so complex?

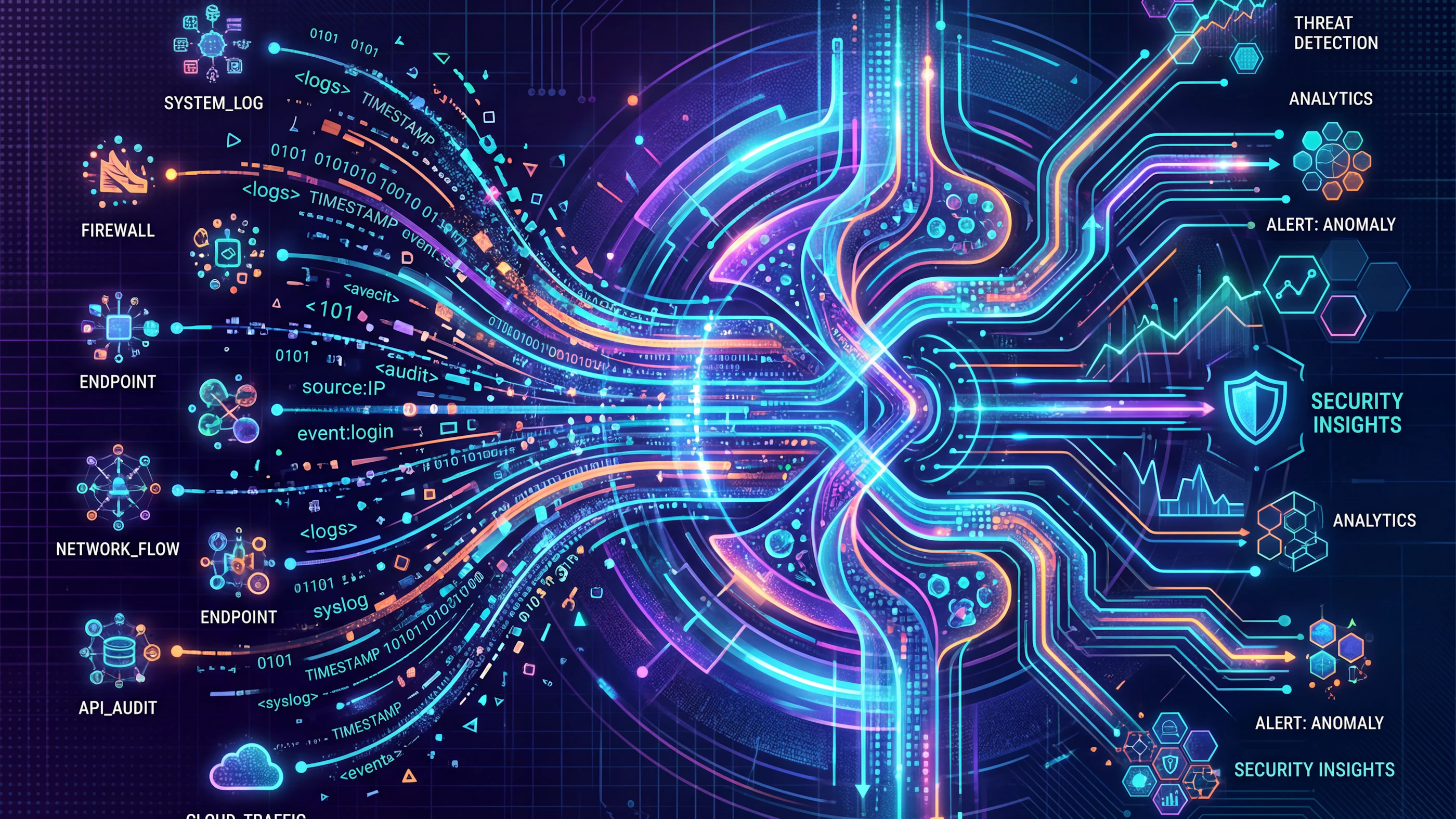

Security data is inherently diverse, noisy, and voluminous. Firewalls, endpoints, cloud environments, identity systems, applications — every source speaks its own language, delivers its own formats, and generates volumes that can quickly reach hundreds of billions of events per day in larger organisations.

The challenge has three dimensions:

Volume and cost. Most organisations cannot afford to store everything indiscriminately. Intelligent tiering — separating hot data for real-time detection from cold data for forensic investigation — is not a luxury, but a necessity.
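
As a rough illustration of tiering, the sketch below decides whether an event belongs in a hot or a cold tier. The 30-day window, the source names, and the `choose_tier` function are assumptions made up for this example; real thresholds depend on budget, detection needs, and compliance requirements.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical values: tune to your own retention and detection requirements.
HOT_WINDOW = timedelta(days=30)
DETECTION_SOURCES = {"edr", "identity", "firewall"}


@dataclass
class Event:
    source: str
    timestamp: datetime


def choose_tier(event: Event, now: datetime) -> str:
    """Return 'hot' for recent events from detection-relevant sources, else 'cold'."""
    age = now - event.timestamp
    if event.source in DETECTION_SOURCES and age <= HOT_WINDOW:
        return "hot"
    return "cold"


now = datetime(2025, 1, 31, tzinfo=timezone.utc)
print(choose_tier(Event("edr", now - timedelta(days=1)), now))
print(choose_tier(Event("edr", now - timedelta(days=90)), now))
```

In this sketch the decision depends on both source and age, so old endpoint data and low-value sources both end up in cheaper storage.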

Normalisation and quality. Raw logs are to detection engineering what raw materials are to manufacturing: the end product is only as good as the processing. Inconsistent field names, missing timestamps, or incorrectly classified events undermine even the best detection logic.
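
To make the normalisation problem concrete, here is a minimal sketch that maps two differently shaped log sources onto one set of field names and flags what is missing. The source names, the field mappings, and the target schema are all hypothetical, and timestamps are assumed to arrive as epoch seconds; this does not represent any particular vendor's data model.

```python
from datetime import datetime, timezone

# Hypothetical per-source mappings onto an illustrative common schema.
FIELD_MAP = {
    "firewall_a": {"src": "source_ip", "dst": "dest_ip", "ts": "timestamp"},
    "proxy_b": {"client_addr": "source_ip", "server_addr": "dest_ip", "time": "timestamp"},
}
REQUIRED = ("source_ip", "dest_ip", "timestamp")


def normalise(source: str, raw: dict) -> dict:
    """Rename source-specific fields and record which required fields are absent."""
    mapping = FIELD_MAP[source]
    event = {target: raw[name] for name, target in mapping.items() if name in raw}
    if "timestamp" in event:
        # Assumption: the raw timestamp is epoch seconds; convert to ISO 8601 in UTC.
        parsed = datetime.fromtimestamp(float(event["timestamp"]), tz=timezone.utc)
        event["timestamp"] = parsed.isoformat()
    event["source"] = source
    event["missing_fields"] = [name for name in REQUIRED if name not in event]
    return event


print(normalise("firewall_a", {"src": "10.0.0.1", "dst": "10.0.0.2", "ts": 0}))
print(normalise("proxy_b", {"client_addr": "10.0.0.3"}))
```

Recording `missing_fields` instead of silently dropping the event keeps quality problems visible to whoever maintains the detection rules.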

Observability of the data itself. Do you know what is flowing through your pipeline? How many events are being dropped? Where are you introducing latency? Without visibility into the data pipeline itself, the SOC is effectively a black box.
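
The questions above translate into a handful of counters per pipeline stage. The sketch below is one possible shape for them; the `StageMetrics` class and its method names are invented for illustration, and a production pipeline would more likely export such figures to a metrics system than keep them in memory.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class StageMetrics:
    """Counts events entering and leaving one pipeline stage (hypothetical helper)."""
    received: int = 0
    forwarded: int = 0
    dropped: int = 0
    latencies: List[float] = field(default_factory=list)

    def record(self, received_at: float, forwarded_at: Optional[float]) -> None:
        # forwarded_at is None when the stage dropped the event.
        self.received += 1
        if forwarded_at is None:
            self.dropped += 1
            return
        self.forwarded += 1
        self.latencies.append(forwarded_at - received_at)

    def drop_rate(self) -> float:
        return self.dropped / self.received if self.received else 0.0

    def max_latency(self) -> float:
        return max(self.latencies, default=0.0)


metrics = StageMetrics()
metrics.record(0.0, 0.5)
metrics.record(1.0, None)   # dropped event
metrics.record(2.0, 4.0)
print(metrics.drop_rate(), metrics.max_latency())
```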

A market in motion

The recognition that data layer architecture is a discipline in its own right has given rise to a growing ecosystem of specialised tooling. Where traditional SIEM platforms long tried to keep everything under one roof, we now see a clear shift towards layered architectures.

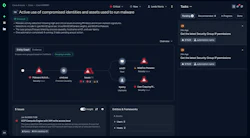

On the SIEM and XDR side, Palo Alto Networks XSIAM is a platform that takes this challenge seriously. XSIAM is designed as an integrated security operations foundation: it combines data ingest, normalisation, detection, SOAR automation, and case management in a single platform, with an explicit focus on the underlying data architecture. The scalable storage, normalisation via XDM (its native data model layer), and the native integration with Cortex XSOAR, the platform on which Nomios runs its own SOC, make XSIAM a coherent choice for organisations seeking genuine control over their entire security data lifecycle.

At the same time, there is growing attention to observability platforms built specifically for the scale and character of security telemetry. Chronosphere is a notable player in that segment: the platform was originally designed for cloud-native observability at extreme volumes, with a strong emphasis on cost control and selective data retention. The recent acquisition of Chronosphere by Palo Alto Networks is telling. It signals that the market recognises data layer observability as an integral part of the security operations stack, and that observability and security are continuing to converge.

Another relevant player in this space is Cribl, which has established itself as a leading data routing and transformation layer between source and SIEM. Cribl Stream offers granular control over which data goes where, making it possible to manage costs without sacrificing detection coverage. Also worth noting is Elastic Security, which, with its open-source roots, offers a flexible, scalable data stack that is widely used in hybrid environments as an alternative or complement to traditional SIEM platforms.

What does this mean for SOC architecture?

The implications are concrete. A modern SOC architecture requires not only a choice of SIEM or XDR platform, but also an explicit strategy for:

- Data ingest and routing: which sources, which volumes, which filtering at the source?

- Normalisation: is data processed against a consistent data model before being used in detection rules?

- Tiering and retention: what is retained for how long, where, and at what cost?

- Observability of the pipeline itself: is it clear what is flowing, what is being lost, and where delays are introduced?
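
The first item in the list above, ingest and routing, can be pictured as an ordered rule table in which the first matching rule decides where an event goes. The rules, destination names, and event fields below are hypothetical and only illustrate the idea; they are not the configuration format of any specific product.

```python
# Hypothetical routing table: rules are checked in order, first match wins.
ROUTES = [
    (lambda e: e.get("severity") == "debug", "drop"),            # filter noise early
    (lambda e: e.get("source") in {"edr", "identity"}, "siem"),  # detection-relevant
    (lambda e: True, "archive"),                                 # everything else
]


def route(event: dict) -> str:
    """Return the destination of the first rule whose predicate matches."""
    for predicate, destination in ROUTES:
        if predicate(event):
            return destination
    return "archive"


print(route({"source": "edr", "severity": "high"}))
print(route({"source": "edr", "severity": "debug"}))
print(route({"source": "netflow", "severity": "info"}))
```

Because the rules are ordered, placing the drop rule first means debug-level noise never reaches the more expensive destinations.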

At Nomios, we see this in practice every day. We support clients in transitioning to — and implementing — XSIAM as the next-generation security operations platform. The integration of Chronosphere within the Palo Alto ecosystem aligns with our conviction that data layer maturity is a prerequisite for effective detection and response, not a by-product of it.

Conclusion

Choosing a SOC platform is less and less a choice of a monolithic product, and more and more an architectural decision about how data flows, is processed, stored, and made accessible. Organisations that take that layer seriously, with the right tooling, the right normalisation strategy, and the right focus on observability, lay a foundation on which detection and response can genuinely perform.

Want to know how Nomios helps organisations design and implement a robust SOC data layer? Get in touch to start the conversation.